SOA and the Mainframe: Two Worlds Collide and Integrate

In the context of Legacy Modernization, an SOA Integration architecture can bring a legacy environment into the world of the World Wide Web, Web 2.0, and all the other latest Internet-based IT architectures.

Like it or not, most mission-critical processing still happens on a mainframe today. Mainframes literally run the business world.

Unfortunately, most companies with systems older than ten years are starting with an architecture that is appropriately called the Accidental Architecture. This architecture resembles a bowl of spaghetti: each strand represents a system, and each overlap between strands represents an integration point between those systems. The accidental architecture was developed over years and includes Remote Procedure Calls (RPC), FTP, message queues, and other forms of integration. As a result, it is not easily understood and is difficult to simply replace or rewrite.

So why should you care about these outdated, primitive mainframe systems that are brittle and difficult to work with? You are a Java/J2EE developer who uses web services, BPEL process managers, and ESBs to do your job on a daily basis. According to a survey by the Software Development Times, “…mainframe applications show up in 47 percent of SOA applications…”. This means that if you are involved in an SOA project, there is almost a fifty percent chance you will have to ‘deal with’ a legacy mainframe system.

SOA can be overhyped and has, in some cases, been exploited by IT vendors as the 'holy grail' of software architecture. However, in the context of Legacy Modernization, an SOA Integration architecture can bring a legacy environment into the world of the World Wide Web, Web 2.0, and all the other latest Internet-based IT architectures. Within days, a legacy system can be accessed via a web browser. This is one of the biggest advantages Legacy SOA Integration has over other types of Legacy Modernization. Your time-to-market is weeks, instead of months or years. We now live in a world of short attention spans and instant gratification; we don't read the morning newspaper but get it on the Net. Our modernization journey needs to reflect these changing times. This is why SOA Integration is most often the first phase of any legacy modernization project.

Legacy SOA Integration: Possible Mainframe Integration Points

Before we get started, we need to understand the different ways you can SOA-enable your mainframe system. We can enable a number of different legacy artifacts. The legacy artifacts are the actual pieces of logic, screen, or data that reside and are processed on the mainframe. The legacy artifact is what the business users want to get to. Let’s look not only at each possible integration point, but also at why you would choose one legacy artifact or access method over another:

- Presentation-tier—This is what is commonly referred to as the ‘green screen’: the big, bulky dumb terminal that was really the only way to have a two-way interaction with the mainframe system. It covers mainframe 3270 or VT220 (DEC) transmissions, iSeries (5250) transmissions, and others.

Why choose presentation-tier over applications, data, and other? The simple answer is that none of the application source is available. Other reasons may be that the data stores cannot be accessed directly because of security or privacy restrictions, or that no stored procedures or SQL exist in the application. SOA enablement of the mainframe application is as simple as running and capturing the screens, menus, and fields you want to expose as services. This is fast, simple, and for the most part, easy.
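The capture step described above can be sketched in a few lines of Java. This is only an illustration, assuming the green screen has already been captured as rows of text; the class name `ScreenFieldMapper`, the field names, and the row and column positions are all hypothetical, and a real terminal-emulation or legacy adapter product would supply its own API for this.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch: map fixed positions on a captured 3270-style screen
// (an array of text rows) to named fields that a service could return.
class ScreenFieldMapper {

    // Each field is stored as {row, column, length}, all zero-based.
    private final Map<String, int[]> layout = new LinkedHashMap<>();

    // Registers a named field at a fixed screen position.
    ScreenFieldMapper define(String name, int row, int col, int length) {
        layout.put(name, new int[] {row, col, length});
        return this;
    }

    // Extracts every defined field from the screen, trimming padding.
    // Positions that fall outside the captured text yield an empty string.
    Map<String, String> extract(String[] screenRows) {
        Map<String, String> values = new LinkedHashMap<>();
        for (Map.Entry<String, int[]> entry : layout.entrySet()) {
            int[] pos = entry.getValue();
            String row = pos[0] < screenRows.length ? screenRows[pos[0]] : "";
            int start = Math.min(pos[1], row.length());
            int end = Math.min(pos[1] + pos[2], row.length());
            values.put(entry.getKey(), row.substring(start, end).trim());
        }
        return values;
    }

    public static void main(String[] args) {
        // A made-up inquiry screen; real screens are typically 24 rows by 80 columns.
        String[] screen = {
            "CUSTOMER INQUIRY",
            "CUST NO: 0012345   NAME: JANE SMITH ",
            "BALANCE:    150.25"
        };
        ScreenFieldMapper mapper = new ScreenFieldMapper()
            .define("customerNumber", 1, 9, 7)
            .define("name", 1, 25, 12)
            .define("balance", 2, 9, 10);
        System.out.println(mapper.extract(screen));
    }
}
```

The point of the sketch is that the service contract (field names) is decoupled from the screen layout (positions), so a layout change on the mainframe only touches the `define` calls.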

- Application—Application service enablement is more than wrapping transactions as web services. This is all about service enabling the behavior of the system, and includes CICS/IMS transactions, Natural transactions, IDMS and ADS/O dialogs, COBOL programs, and batch processes. But it also includes the business rules, data validation logic, and other business processing that are part of the transaction.

Why application-based legacy SOA? The application is at the core of most systems. The application contains the screens that are run, the business logic, business rules, workflow, security and the overall behavior of the legacy systems. Transactions on mainframe systems are the way that IT users interact with the system. So using the application layer makes the most sense when you want to replicate the functionality that the legacy system is currently using. This approach allows you to leverage all the behaviors (rules, transaction flow, logic, and security) of the application without having to re-invent it on open systems.

- Data—The data can be relational or non-relational on the legacy system. In most cases, the legacy system will have a non-relational data store such as a keyed file, network database, or a hierarchical file system. While accessing data in a legacy system, the SOA integration layer will use SQL to provide a single, well-understood method to access any data source. This is important as some organizations will prefer to have SQL-based integration as opposed to SOA-based data integration. The IT architect may decide that having a SQL statement in an open systems database is easier than putting in place an entire SOA infrastructure.

Why data? This is ultimately the source of the truth. This is where the information that you want is stored. If you are using any of the other three artifacts, these methods will ultimately call the data store. So, it seems very reasonable that most of your services act directly against the data. Sometimes, security and privacy concerns will not make this possible. Sometimes, the data needs to have business logic, business rules, or transformation applied before it is valid. However, if these things are not applicable, going right to the data source is a nice way to proceed.

- Other—Stored procedures and SQL are the way most distributed applications get results from a data store. Stored procedures also provide major benefits in the areas of application performance, code re-use, application logic encapsulation, security, and integrity.

Why stored procedures and SQL? You are making a move to distributed, open systems, and relational databases, so you should use technologies that work well in this environment. There is also the people and skills aspect. Your open systems developers will be very familiar with stored procedures, and find them easy to develop.

Legacy SOA Integration — Four Typical Use Cases

It has been said a number of times in the technical journals and by IT analysts that SOA is not a product or solution but a journey. If SOA is a journey, then Legacy SOA modernization is a journey where you 'pack extra clothes'. This is because your Legacy SOA modernization journey will take unexpected twists and turns, and you will be going to unexpected places as business objectives, key personnel, and technologies change during the journey.

We will use a set of common Legacy SOA situations we have come across, as we help you on your journey to a modern IT platform. We will then use a design pattern that we have found successful for each use case.

Use Case One — Legacy SOA Enterprise Information Integration (EII)

Also known as: Data Integration, file sharing, file messaging

Problem

My current mainframe-based infrastructure for information integration is fragile, expensive, and hard to maintain.

The problem is often characterized by the lack of a common workflow approach, missing data quality and data profiling capabilities, customized transformation logic unique to each data feed, no real-time monitoring capability, and an inability to quickly add new data feeds.

Context

Data needs to be shared with new systems developed on open systems and other mainframe systems, both internal and external to the company and/or the organization we work for.

Almost all mainframe systems have data feeds coming into them or going out of them. These data feeds are typically controlled by a job scheduling system and run during nightly batch cycles.

Forces

Applications and organizations were standalone in the past. Now there is a big need to share information between applications and businesses.

Solution

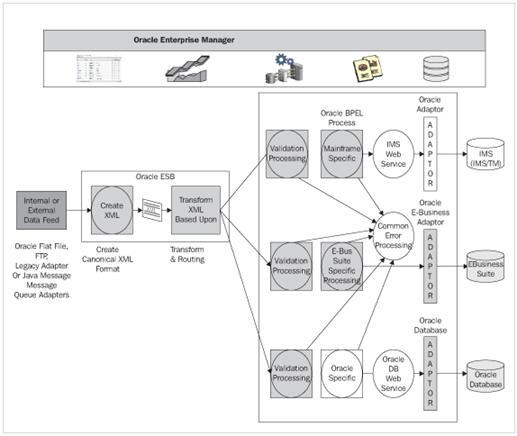

Architecture Summary

The objective of Legacy SOA Integration is not to disrupt the current business processing and legacy system. We hold true to this by keeping the data feed the same. Attempting to perform even minor changes to data feeds is nearly impossible because:

- If a third party or even an internal organization outside your control is involved, the ability to get the change completed can take months.

- Even internally owned data feeds impact the source systems, current processing, and target systems so that even a small change has a ripple effect that causes the change to take months.

The current data feed will remain: it will continue to be delivered by FTP to a directory and will most likely remain batched. The diagram also shows technologies like Legacy Adapters and Oracle Messaging, as these can be adopted when changes to business processes are made.

- Oracle ESB—The Oracle ESB will use the file or FTP adapters to read the flat file and then transform the flat file to a common (canonical) XML file format. Based on the source of the data feed, the message will be routed to the appropriate Oracle BPEL process.

- Oracle BPEL—This is where the workflow and processing that we discussed earlier comes in:

- Oracle BPEL will call a Java or Web service process to perform any validation processing. This validation processing will probably call out to the Oracle database to validate information based upon the data in the database.

- After validation, file type-specific processing takes place. This is basically ‘business rules’ being applied to the input data file. This business processing could call the Oracle Rules engine (we will leave this topic to Chapter Six).

- Common Error Processing—Validation and/or business rule processing errors will be passed to an error-handling route. The BPEL worklist will be populated, so a human may correct the problem file or records.

- Data persistence Web service—The data will be persisted in the Oracle database, IMS database, and/or the Oracle E-Business Suite.
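The flow described in the list above (transform a flat record to a canonical XML message, route it by source, validate it, and send failures to a worklist) can be sketched in plain Java. This is not the Oracle ESB or Oracle BPEL API; it is a minimal illustration under stated assumptions. The pipe-delimited layout `source|account|amount`, the process names, and the class `FeedPipeline` are all hypothetical.

```java
import java.math.BigDecimal;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of the feed flow: canonical XML, routing, validation,
// and an error route. Plain Java, not an Oracle ESB or BPEL API.
class FeedPipeline {

    // Records awaiting human correction, standing in for a BPEL worklist.
    final List<String> worklist = new ArrayList<>();
    // Canonical messages handed to a downstream process.
    final List<String> delivered = new ArrayList<>();

    // Builds the canonical XML message for one record.
    static String toCanonicalXml(String source, String account, String amount) {
        return "<feedRecord source=\"" + escape(source) + "\">"
             + "<account>" + escape(account) + "</account>"
             + "<amount>" + escape(amount) + "</amount>"
             + "</feedRecord>";
    }

    // Escapes the characters that are special in XML text and attributes.
    static String escape(String s) {
        return s.replace("&", "&amp;").replace("<", "&lt;")
                .replace(">", "&gt;").replace("\"", "&quot;");
    }

    // Chooses the target process from the source of the data feed.
    static String route(String source) {
        switch (source) {
            case "BILLING": return "BillingProcess";
            case "CLAIMS":  return "ClaimsProcess";
            default:        return "DefaultProcess";
        }
    }

    // Returns a list of validation errors; an empty list means the record is valid.
    static List<String> validate(String account, String amount) {
        List<String> errors = new ArrayList<>();
        if (!account.matches("\\d+")) {
            errors.add("account must be numeric");
        }
        try {
            new BigDecimal(amount);
        } catch (NumberFormatException e) {
            errors.add("amount must be a number");
        }
        return errors;
    }

    // Processes one flat-file line and returns the name of where it ended up.
    String process(String line) {
        String[] f = line.split("\\|", -1);
        if (f.length != 3) {
            worklist.add(line + " -> expected 3 fields");
            return "ErrorWorklist";
        }
        String source = f[0].trim();
        String account = f[1].trim();
        String amount = f[2].trim();
        List<String> errors = validate(account, amount);
        if (!errors.isEmpty()) {
            worklist.add(line + " -> " + errors);
            return "ErrorWorklist";
        }
        delivered.add(toCanonicalXml(source, account, amount));
        return route(source);
    }

    public static void main(String[] args) {
        FeedPipeline pipeline = new FeedPipeline();
        System.out.println(pipeline.process("BILLING|00123|42.50"));
        System.out.println(pipeline.process("CLAIMS|abc|not-a-number"));
        System.out.println(pipeline.delivered);
        System.out.println(pipeline.worklist);
    }
}
```

Note that the incoming flat file is left untouched, in keeping with the objective of not disturbing the existing data feed; all new behavior sits downstream of it.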

Use Case Two — Legacy SOA Web Enablement

Also known as: Screen Scraping, Re-interfacing

Problem

My customer support people, sales representatives, customers, and partners would like to access our system through the Web. Why can't I have one interface to update both my legacy system and Oracle system?

Old 'green screen' technologies have many limitations. A big one is that they are not very intuitive. You have to access several screens or systems to get the information you need, and there is no 'point and click'. In addition, users often have to go to multiple systems to either query or update the same or similar data.

Context

Users want their data now, wherever they are, and at any time of the day or night. Users also want their legacy systems and new Oracle environments to work together.

A business process that requires a user to query multiple systems and then update multiple systems makes everything move slower and, in many cases, may cause data inconsistencies.

Forces

We are seeing growing ranks of users who were online and on the web at a very young age. Technologies providing better application interfaces have been in the marketplace for years.

Solution

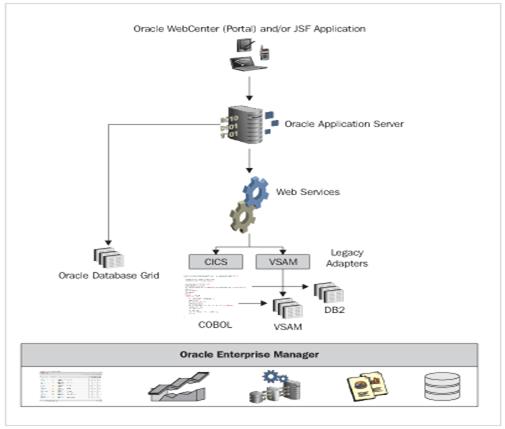

Use Case Two Architecture Summary

The key to this use case is the interface. Therefore, Oracle WebCenter and/or JSP/JSF will be used. For the first iteration of this use case, JSP and/or JSF can be used to keep development simple and deployment quicker. A more sophisticated interface would be to use JSF to develop JSR-168 portlets, and deploy them using Oracle WebCenter or similar technology.

Use Case Three — Legacy SOA Report Off Load Using Data Migration

Also known as: Data migration, Legacy Operational Data Store, Reporting Modernization, Business Intelligence Consolidation

Problem

IT perspective—My legacy reporting infrastructure is costing me millions to run and I have a six-month backlog of report requests.

User perspective—I already have 100 green bar reports but I still cannot make business decisions with the information I have.

Context

Users need access to information in a variety of formats and dimensions. They also need to be able to easily do 'ad hoc' and 'what if' scenarios.

It is not uncommon for a mainframe-centric organization to do all of its strategic sales forecasting in spreadsheets.

Forces

Doing reporting on the mainframe is expensive, and business users cannot get access to the information they need to make decisions. So a typical organization will find that users have created their own reports using Excel, SQL, and other desktop tools. Data then gets duplicated throughout the enterprise.

Solution

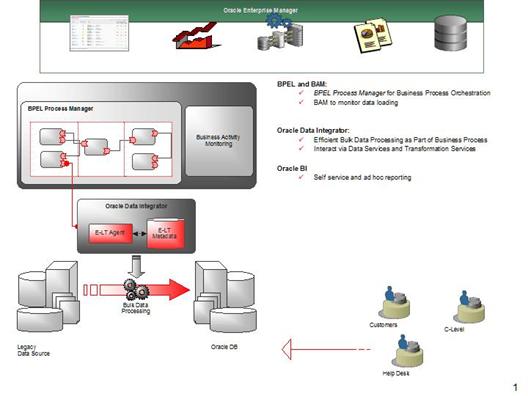

Use Case Three Architecture Summary

In Oracle BAM, both the end user decision makers and IT management see the real-time flow of information into the reporting system. End user decision makers can make real-time decisions based upon the most current information. IT management can get immediate alerts if a data load is running slowly, and can see the amount of data being loaded each minute or the average response time of users' ad hoc reports.

BPEL is used to orchestrate the flow of information into the Oracle database. BPEL can be used to schedule data loads based upon the time of arrival or the arrival of a specific file, or by constantly looking for data extracts to load.
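The "constantly looking for data extracts" option amounts to polling a landing directory. The sketch below shows one polling pass using only the standard `java.nio.file` API; it is not BPEL, and the `*.dat` file pattern, the `processed` subdirectory, and the class name `ExtractPoller` are assumptions made for illustration. The actual bulk load is left as a comment.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical sketch: one polling pass over a landing directory of extracts.
class ExtractPoller {

    // Lists regular files in the landing directory that match the glob,
    // sorted by path so that loads happen in a predictable order.
    static List<Path> pendingExtracts(Path landingDir, String glob) throws IOException {
        List<Path> found = new ArrayList<>();
        try (DirectoryStream<Path> stream = Files.newDirectoryStream(landingDir, glob)) {
            for (Path p : stream) {
                if (Files.isRegularFile(p)) {
                    found.add(p);
                }
            }
        }
        Collections.sort(found);
        return found;
    }

    // Handles every waiting extract once and returns how many were picked up.
    static int pollOnce(Path landingDir, Path processedDir) throws IOException {
        Files.createDirectories(processedDir);
        int loaded = 0;
        for (Path extract : pendingExtracts(landingDir, "*.dat")) {
            // A real implementation would trigger the bulk load here.
            // Moving the file afterwards keeps it from being loaded twice.
            Files.move(extract, processedDir.resolve(extract.getFileName()),
                       StandardCopyOption.REPLACE_EXISTING);
            loaded++;
        }
        return loaded;
    }

    public static void main(String[] args) throws IOException {
        Path landing = Paths.get(args.length > 0 ? args[0] : "landing");
        Files.createDirectories(landing);
        int count = pollOnce(landing, landing.resolve("processed"));
        System.out.println("Picked up " + count + " extract(s)");
    }
}
```

To poll continuously, `pollOnce` could be called at a fixed interval, for example from a `java.util.concurrent.ScheduledExecutorService`.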

Oracle Data Integrator (ODI) provides a fast method to bulk load data into the Oracle reporting database. It provides access to data transformation, data cleansing, and data management services. As ODI is fully Web service-enabled, any ODI component can be consumed by Oracle BPEL, Oracle ESB, or any other Web service-enabled tool or product.

Use Case Four — End-to-End SOA

Also known as: Software as a Service, Legacy SOA Integration

Problem

My legacy system is a 'black box'. Getting information into it is painful and getting information out is worse. I also have no idea of the business processes that run my business.

Context

Your mainframe system does not reflect how business is done today. The legacy system is difficult to maintain and enhance, and it is hard to roll out new services (product offerings) to internal and external customers.

Forces

The user community demands information to be processed in real time and results to be available immediately. Information integration interfaces into the system are changing every week, and new trading partners wish to interface with your business in days, not months. The system interface needs to be personalized, so that internal power users see all the information, internal salespeople see only the sales data pertinent to them, customers see only their own data and orders, and company executives have real-time insight into the business as it stands right now, and not what it looked like three weeks ago.

Solution

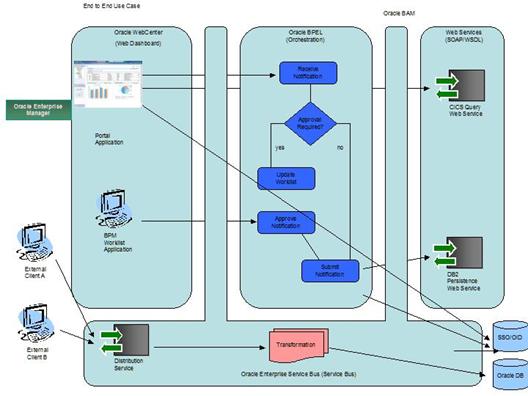

Use Case Four Architecture Summary

Since we want to offer user-personalized views into the system, Oracle WebCenter or similar technology is the easiest and quickest way to do this.
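Whatever portal technology renders the page, the personalization rule itself is a filter keyed on the user's role. The sketch below is plain Java, not a WebCenter API; the `Role` values, the `Order` fields, and the class name `PersonalizedView` are hypothetical and only mirror the power user, salesperson, and customer views described in the Forces section.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical sketch: role-based filtering behind a personalized view.
class PersonalizedView {

    enum Role { POWER_USER, SALES_REP, CUSTOMER }

    static final class Order {
        final String orderId;
        final String customerId;
        final String salesRepId;

        Order(String orderId, String customerId, String salesRepId) {
            this.orderId = orderId;
            this.customerId = customerId;
            this.salesRepId = salesRepId;
        }
    }

    // Returns only the orders the given user is allowed to see.
    static List<Order> visibleOrders(Role role, String userId, List<Order> all) {
        List<Order> visible = new ArrayList<>();
        for (Order o : all) {
            boolean allowed;
            switch (role) {
                case POWER_USER: allowed = true; break;                          // sees everything
                case SALES_REP:  allowed = o.salesRepId.equals(userId); break;   // own sales only
                case CUSTOMER:   allowed = o.customerId.equals(userId); break;   // own orders only
                default:         allowed = false;
            }
            if (allowed) {
                visible.add(o);
            }
        }
        return visible;
    }

    public static void main(String[] args) {
        List<Order> orders = Arrays.asList(
            new Order("O-1", "C-100", "S-7"),
            new Order("O-2", "C-200", "S-7"),
            new Order("O-3", "C-100", "S-9"));
        System.out.println(visibleOrders(Role.POWER_USER, "admin", orders).size()); // 3
        System.out.println(visibleOrders(Role.SALES_REP, "S-7", orders).size());    // 2
        System.out.println(visibleOrders(Role.CUSTOMER, "C-200", orders).size());   // 1
    }
}
```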

BAM plays a big role here, as the amount of processing increases. BAM will make it much easier to monitor all these business processes and services in one screen.

Just as the ESB was used to help migrate data from other systems and provide an integration bus earlier, ESB is used here to accept two different input file formats and process them in a single workflow.
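Accepting two input formats in a single workflow usually means normalizing each format into one canonical record before any shared processing runs. The sketch below illustrates the idea in plain Java, not with an ESB adapter. The two layouts (a comma-separated `account,amount` line and a fixed-width line with two 10-character columns), the `.csv` and `.fix` file extensions, and the class name `MultiFormatIntake` are assumptions made for the example.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch: two input formats normalized into one canonical record.
class MultiFormatIntake {

    // Assumed CSV layout: account,amount
    static Map<String, String> fromCsv(String line) {
        String[] f = line.split(",", -1);
        if (f.length != 2) {
            throw new IllegalArgumentException("expected 2 CSV fields, got " + f.length);
        }
        return canonical(f[0], f[1]);
    }

    // Assumed fixed-width layout: account in columns 0-9, amount in columns 10-19.
    static Map<String, String> fromFixedWidth(String line) {
        if (line.length() < 20) {
            throw new IllegalArgumentException("expected at least 20 characters");
        }
        return canonical(line.substring(0, 10), line.substring(10, 20));
    }

    // The canonical form that the rest of the workflow consumes.
    private static Map<String, String> canonical(String account, String amount) {
        Map<String, String> record = new LinkedHashMap<>();
        record.put("account", account.trim());
        record.put("amount", amount.trim());
        return record;
    }

    // Picks a parser from the file name; everything after this call is shared.
    static Map<String, String> normalize(String fileName, String line) {
        if (fileName.endsWith(".csv")) {
            return fromCsv(line);
        }
        if (fileName.endsWith(".fix")) {
            return fromFixedWidth(line);
        }
        throw new IllegalArgumentException("unknown feed format: " + fileName);
    }

    public static void main(String[] args) {
        Map<String, String> a = normalize("partnerA.csv", "00123,42.50");
        // Build the fixed-width line with padding so the column widths are exact.
        Map<String, String> b = normalize("partnerB.fix",
                                          String.format("%-10s%-10s", "00123", "42.50"));
        System.out.println(a);
        System.out.println(a.equals(b)); // true: both formats yield the same record
    }
}
```

Adding a third trading partner format then means adding one parser method and one branch in `normalize`, with no change to the downstream workflow.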

Summary

According to the Aberdeen Group: "Organizations that are SOA-enabling their legacy applications on the legacy platform are outperforming those that are using any other approach. They report better productivity, higher agility, and lower costs for legacy integration projects". This is one IT analyst's perspective, but it does highlight the importance of SOA integration in any company's legacy modernization strategy. This is because Legacy SOA Integration can bring an organization into the 21st century rather quickly (in months, not years). It also provides self-service customer interaction, consolidated business processes, and a much more agile IT infrastructure.